When dynamic maps were in the early stages of development, or even just concepts, I was thinking about a ‘replay’ function: a sort of step-sequencing along a chosen dimension. Some of these ideas were presented in the “Incident Prototype” in 2014 (here at 2:24), where you can see sequencing over a day or a month.

Another demonstration took place in the Green Space Analyzer in 2015, when Smart M.Apps were introduced (at 2:04), where years are sequenced.

An important part of the idea was the editing functionality, or more generally how to sequence and capture ‘user motion’. In multidimensional data there is a nearly unlimited number of combinations and ways in which data can be filtered over time. However, instead of continuous time sequencing, we can simplify to ‘step-sequencing’, which is well known in the music industry: many digital instruments offer it, especially rhythm, drum or groove machines. The geospatial industry could learn a lot from looking at how this function, core to music production, is done there, and take inspiration for smart mapping.

For example, the Korg Minilogue xd captures the ‘user motion’ of the filters, which can be defined for each of the 16 steps.

Why is this important? Imagine storytelling where someone shows how to filter data to get certain interesting results. They then save their filter choices over time (in the form of a motion sequence) so other people can load it, ‘replay’ it and tweak it further.

I believe this might be very useful for showcasing how some phenomenon evolves, or how someone (say, an expert) arrived at certain results.

Or it might be useful as a ‘Smart Video’ of maps: instead of publishing a dumb video, you publish a dynamic map plus a ‘motion sequence’ of when and what is filtered (including map manipulations like zooms).

This is like what everyone knows from weather forecasts: a replay or forecast of cloud/pressure/wind motion. However, instead of a video you get a real smart map, one that replays its state based on the sequencer data.
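To make the idea a bit more concrete, here is a minimal sketch of how such a motion sequence could be stored and replayed. It is only an illustration under my own assumptions: the names `Step`, `MotionSequence`, `MapSnapshot` and `replay` are hypothetical and do not come from any existing product or library. Each step records only what changes (some filters, optionally a map move), and an empty step simply holds the previous state, much like an empty step on a drum machine.

```typescript
// Hypothetical data model for a 'motion sequence'; not an existing API.
type Filters = Record<string, string | number>;

interface View {
  center: [number, number]; // assumed order: latitude, longitude
  zoom: number;
}

interface Step {
  filters: Filters; // only the filters that change at this step
  view?: View;      // optional map manipulation (pan/zoom) at this step
}

interface MotionSequence {
  steps: Array<Step | null>; // null = empty step, previous state is held
}

interface MapSnapshot {
  filters: Filters;
  view: View | null;
}

// Returns the full map state after every step, so a player only has to
// show snapshot i at tick i.
function replay(seq: MotionSequence, initial: MapSnapshot): MapSnapshot[] {
  const out: MapSnapshot[] = [];
  let current = initial;
  for (const step of seq.steps) {
    if (step !== null) {
      current = {
        // later filter values override earlier ones, others are kept
        filters: { ...current.filters, ...step.filters },
        view: step.view ?? current.view,
      };
    }
    out.push(current);
  }
  return out;
}

// Example: sequencing years, with one empty step and one zoom.
const demo: MotionSequence = {
  steps: [
    { filters: { year: 2013 } },
    null,
    { filters: { year: 2014 }, view: { center: [49.3, 17.7], zoom: 12 } },
    { filters: { year: 2015 } },
  ],
};

const frames = replay(demo, { filters: { district: "all" }, view: null });
```

Because the sequence is plain data, it could be saved and loaded as JSON, which is what would let someone else load a sequence, replay it and tweak individual steps.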

State of state

Keeping the current state of the filters modified by the user is the first step.

While a lot of user interaction already happens on the client side, most of the leading solutions do not keep the state of the selected filters, so refreshing the URL doesn’t keep what the user has previously selected. In short, all UI state is lost. Maybe this is a ‘feature’ to reset the state rather than an inconsistency, but then you need another button that explicitly gives you a link to the current state…

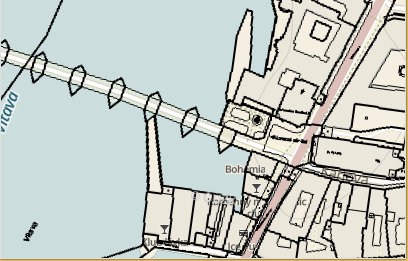

In iKatastr.cz I keep the state in the URL. This makes it easy to go back and forward through what the user has previously selected (here a parcel). Moreover, it enables direct link sharing and one button less in the UI (the one for sharing a link): whatever the user sets in the UI is reflected in the URL as a fragment (starting with #), so at any time the user can grab the URL and send it by SMS or email. This plays well on iOS/Android with the default ‘share URL’ function of the browsers. So try this link: https://ikatastr.cz/#kde=49.31166,17.75353,18&mapa=zakladni&vrstvy=parcelybudovy&info=49.31142,17.75423

and try to refresh it: you will see the same state as before the refresh. You can also select other parcels and then press the back button in the browser. You can experience manual back/forward ‘sequencing’ of your previous interactions. This is most likely the way to go, and it can evolve further into full step-sequencing.
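As a rough illustration of keeping state in the fragment, here is a small sketch that parses and rebuilds a fragment shaped like the one in the link above. This is not the actual iKatastr code. The `MapState` type and the functions `decodeState` and `encodeState` are hypothetical, and the meaning I give to each key (`kde` as latitude, longitude, zoom; `info` as the location of the selected parcel) is an assumption read off the example link.

```typescript
// Hypothetical state shape; key meanings are assumptions, not documented API.
interface MapState {
  kde: [number, number, number]; // assumed: latitude, longitude, zoom
  mapa: string;                  // assumed: base map id
  vrstvy: string;                // assumed: visible layers
  info: [number, number] | null; // assumed: location of the selected parcel
}

// Parse "#key=value&key=value" into a MapState.
function decodeState(fragment: string): MapState {
  const pairs: Record<string, string> = {};
  for (const part of fragment.replace(/^#/, "").split("&")) {
    const i = part.indexOf("=");
    if (i > 0) {
      pairs[part.slice(0, i)] = decodeURIComponent(part.slice(i + 1));
    }
  }
  // Split a comma-separated value into numbers; missing key gives [].
  const nums = (key: string): number[] =>
    (pairs[key] || "").split(",").filter((x) => x !== "").map(Number);
  const kde = nums("kde");
  const info = nums("info");
  return {
    kde: [kde[0] ?? 0, kde[1] ?? 0, kde[2] ?? 0],
    mapa: pairs["mapa"] || "",
    vrstvy: pairs["vrstvy"] || "",
    info: info.length === 2 ? [info[0] ?? 0, info[1] ?? 0] : null,
  };
}

// Build the fragment back from the state, keeping commas readable.
function encodeState(s: MapState): string {
  const parts = [
    `kde=${s.kde.join(",")}`,
    `mapa=${encodeURIComponent(s.mapa)}`,
    `vrstvy=${encodeURIComponent(s.vrstvy)}`,
  ];
  if (s.info !== null) {
    parts.push(`info=${s.info.join(",")}`);
  }
  return "#" + parts.join("&");
}

const fragment =
  "#kde=49.31166,17.75353,18&mapa=zakladni&vrstvy=parcelybudovy&info=49.31142,17.75423";
const state = decodeState(fragment);
const rebuilt = encodeState(state);
```

In a browser, the decoded state would be applied to the map on page load and on every fragment change, and the encoded state written back to the URL after each user action. Each such change then becomes a history entry, which is what gives the back/forward behaviour for free.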

In recent months I have been programming the “Green Space Analyzer” web app, which shows a modern approach to visualizing and querying multi-temporal geospatial data. The user sees information in a form they can interact with, and can discover new patterns, phenomena or information thanks to the very fast feedback of the UI to user input. When the user selects, for example, a certain area, all graphs instantly animate a transition to reflect the selection, which helps to better understand the dynamics of the change. Animation can be seen everywhere: from labels on the bar chart, through color changes of the choropleth, up to the title summary. It creates a subtle feeling of control, of knowing what has changed and how it has changed. At the HxGN 15 conference, in the Hexagon Geospatial keynote, CEO Mladen Stojic

For this year’s HxGN14 conference I have prepared a web app for modern data visualization. I got inspired by great ideas from
