AI coding agents are useful. However, I keep wondering whether the trade-off is a limitation of knowledge flow inside teams. A team can become faster at producing output while becoming worse at building shared understanding. And that shared understanding depends not only on what the team knows, but also on the social fabric through which knowledge moves: trust, explanation, mentorship, disagreement, and shared ownership. If that weakens, the long-term cost may be larger than we think. I think of it as the risk of “team knowledge depreciation”: trading long-term learning and collective understanding for short-term gains in speed.

This is, in some sense, a more grounded follow-up to my earlier, over-excited post “Developer Twin”.

1. AI may optimize for local speed while weakening global understanding. A patch gets written faster, but fewer people understand why it exists, which trade-offs were made, and what it may affect elsewhere. Individual throughput and collective intelligence are not the same thing.

2. The biggest risk may not be code quality, but knowledge flow. Teams do not work only because individuals are smart. They work because knowledge moves between people and gets recombined into a shared view of the system.

3. A team does not need everyone to understand everything. But it does need a healthy way to reconstruct the whole together. That shared reconstruction may become weaker if too much work shifts from human-to-human exchange to human-to-AI interaction.

4. Documentation can serialize rules, but not fully transmit judgment. Skills, playbooks, prompts, and agent instructions can capture explicit knowledge. They do not fully capture the painful experience from which engineering judgment is formed.

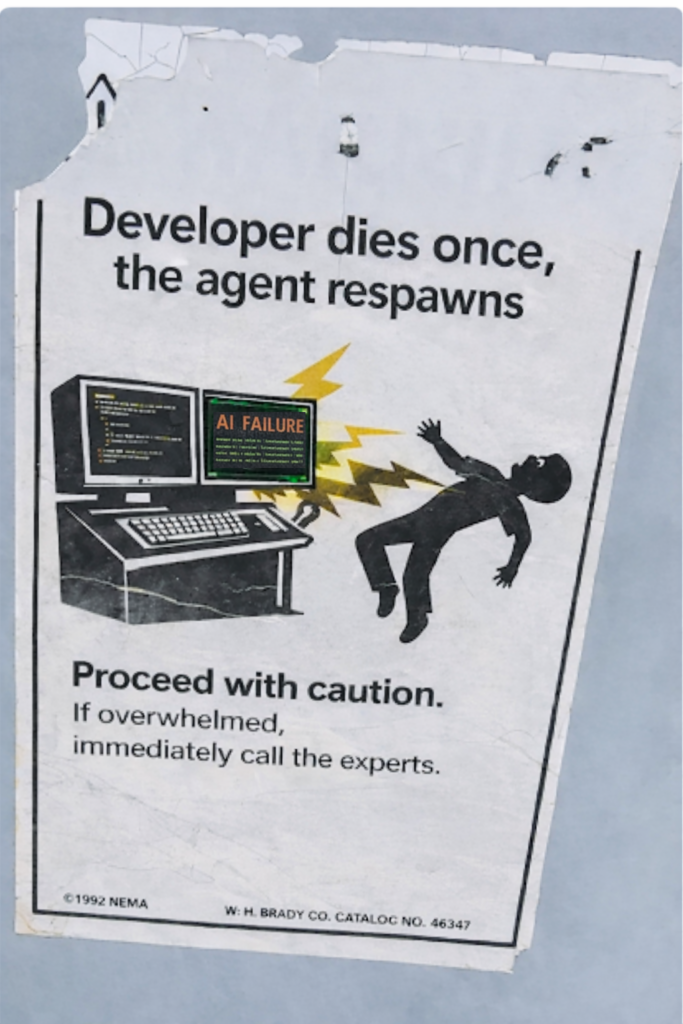

5. Mentoring a person compounds. Steering an AI often does not. When you invest in a junior engineer, the team may get that investment back over time. With current AI systems, much of that effort is spent in the moment and does not carry over to the next task.

6. Faster delivery can hide a weakening learning culture. A team may ship more while learning less. Over time, that can turn into a quiet form of knowledge capital depreciation: accumulated expertise is consumed faster than it is renewed. The deeper question is what kinds of team behavior AI quietly rewards, and what kinds it replaces.

7. The real challenge is not just building faster systems, but preserving the human mechanisms that make learning possible. Speed matters. But so do explanation, mentorship, disagreement, review, and shared ownership.

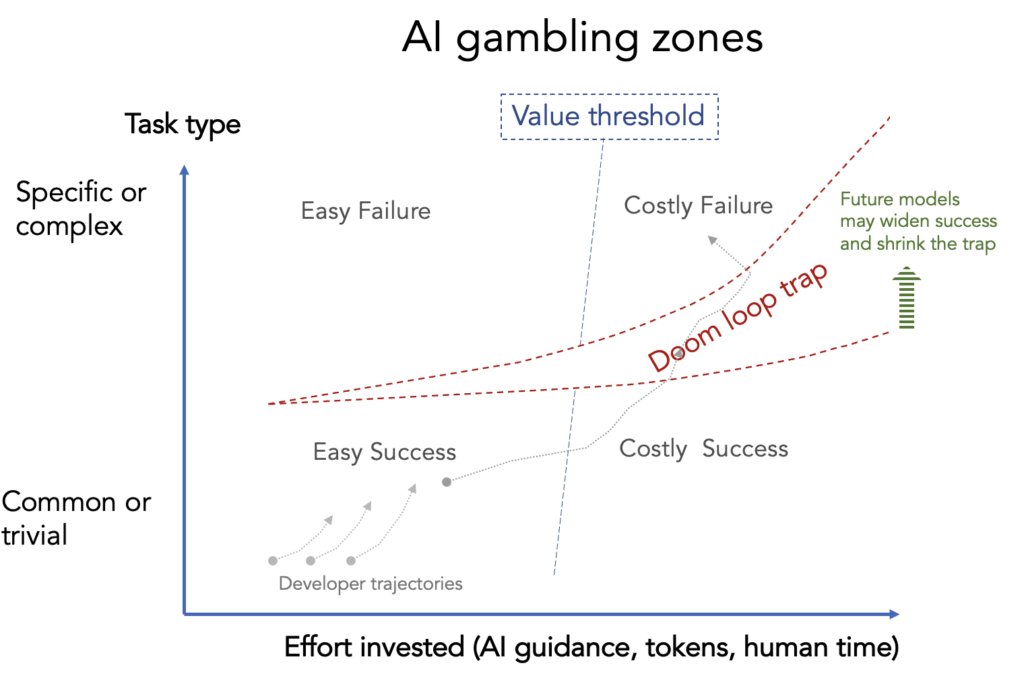

Updated on 31.3.: fewer words. Also added an AI usefulness map here: https://blog.sumbera.com/2026/03/28/ai-usefulness/